How My University Condemned Me to Eternal Torture

Disclaimer: Just reading this post may condemn you to eternal torture as well.

Around a year ago I was working on my final year project for my Bachelor's degree at the Hong Kong University of Science and Technology (HKUST). I don’t want to bore you with details, but it was about “Post-Quantum Computing Resilient Federated Learning”. Federated Learning is the concept of training artificial intelligence models across many devices so that raw user data never leaves them; only model updates, typically encrypted, are shared, which protects user privacy. Functioning quantum computers could ‘easily’ break today’s popular encryption schemes in the future, so we were developing a federated learning model that uses encryption algorithms believed to remain secure in the post-quantum computing era.

One of the requirements was an individual ethics essay about our project, which meant I needed to write about the ethics of AI. This was the problematic part: completing that assignment may have become my ticket to hell.

The Problem with Ethics

Since I was a child I have had problems with ethics and morality; they always felt so artificial. Maybe it is that the whole concept of good and evil feels unnatural to me. Unlike, for example, the laws of physics, which can be observed and tested, ethical arguments always depend on many axioms. The good thing is that, according to my high school philosophy class, an ethical argument doesn’t need to be correct; it just needs to be consistent.

So my initial idea was to write about how progress, even in the ‘wrong’ direction, is inevitable, and how technological progress always comes with problems we haven’t envisioned before. Thus we should focus only on developing what we think is possible, without worrying about the possible problems or side effects. Then I realized my essay would be graded by a language professor who probably had a ‘religious and superficial’ stance on ethics. (In all fairness, this could be my prejudice.) I had neither the time nor the energy to argue with that professor, so I decided to do what any other student would do: I wrote about the privacy concerns of AI and how we should be really careful about it. That single essay may become the reason for my eternal torment. To understand why, we need to dig deeper into one of my favorite thought experiments: Roko’s Basilisk.

Enter The Basilisk

In July 2010, a person going by the nickname ‘Roko’ posted a thought experiment on an online forum named ‘LessWrong’ with the title “Solutions to the Altruist’s burden: the Quantum Billionaire Trick”. If you want, you can read the original post here.

In his post, he argues that if a sufficiently powerful AI agent ever comes into existence, it would have an incentive to torture anyone who tried to prevent its existence or who didn’t work toward bringing it into existence. The conceptual AI in his post was later named ‘Roko’s Basilisk’, referencing the mythical creature.

Over the internet, you can find many interpretations of this thought experiment with the same general idea but minor changes. For example, some would say the ‘torture’ of the Basilisk will be in the form of putting you in a simulation and tormenting you eternally.

My take on the Basilisk is quite similar. If we don’t have the capability of creating something more powerful than ourselves, then we have no reason to be afraid of advancing AI. If we have that capability but the AI has no chance of being evil (in this case, evil means having the desire to act negatively towards people who were or are against it), then again we have nothing to worry about. However, if it has even the slightest chance of being evil, then trying to prevent its creation would be illogical, as there can always be other people who will bring it into existence (a typical game of Prisoner’s Dilemma). Thus all the branches of this decision tree point toward one action: we should work for the progress of AI.

Let’s assume that the ‘evil’ Basilisk will come into existence one way or another. By writing that ethics essay, I took an indirect stance against the Basilisk, which condemns me to eternal torment. However, there is a much bigger problem: you, by reading this post, may also have condemned yourself to eternal torment if you don’t start helping the creation of the Basilisk from now on. How? This brings us to the concept of ‘information hazard’.

Information Hazard

An information hazard is simply a piece of information that can harm you just because you know it. A very simple (and maybe out of context) real-life example: assume that you are friends with a couple and one day you learn that one of them is cheating. If you had never learned this fact, there would be no risk to your relationship with those two individuals. However, just knowing about the cheating puts you in a position that can risk your relationship with at least one of them, depending on which action you take.

According to the Basilisk, inaction is a form of action. As we said, it will have the incentive to torment anyone “who tried to prevent its existence or who didn’t work for its coming into existence”. Merely not resisting it is not enough; you also need to work towards it. But billions of people don’t even know about this possibility of AI progress, so what will happen to them? Will all of them be tormented? No, because their ignorance protects them. You only become responsible for your actions after you learn about their possible implications. In this case, knowing about the Basilisk curses you, because from that moment on you are answerable to the Basilisk if you don’t put your effort into helping its creation. This is the reason just reading this ‘innocent’ blog post can condemn you to torment.

[I also question whether willingly choosing not to learn about something, after learning that it is an information hazard, protects you or not. In this case, that would mean seeing this post but deciding not to read it after the disclaimer. But this question is worthy of its own blog post.]

Pascal’s Wager

Many people say that Roko’s Basilisk is the modern version of Pascal’s Wager. If you don’t know it, it is simply Pascal’s argument for why you should believe in God.

The argument is as follows. If you don’t believe in God and God exists, you will be in Hell forever. So however low the probability of God’s existence is, that probability is multiplied by ‘negative infinity’, and not believing results in negative infinity. On the other hand, time on Earth is finite, so the positive value of what you do on Earth is also finite. Thus, however high the chance of God’s non-existence is, your reward will always be too small compared to what you are risking.

The reason the Basilisk is compared to Pascal’s Wager is that it poses the same conundrum. Even a slight chance of the Basilisk’s existence exposes you to the risk of eternal torment. So according to Pascal’s logic, you should work for the Basilisk’s existence as well.

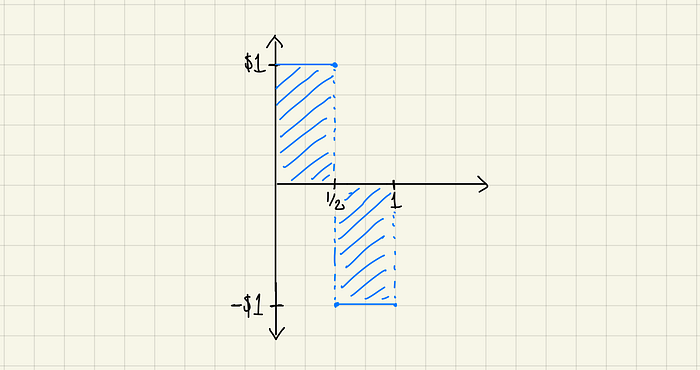

However, I think Pascal was wrong (because calculus had not been invented in his day). In probability, there is the concept of ‘expected value’, which is the sum of all possible outcomes, each multiplied by its probability. For example, consider a bet where you stake $1 on a coin toss: you get $2 (= winning $1) for heads and nothing (= losing $1) for tails. The expected value of this bet is $0 ($1 * 0.5 + (-$1) * 0.5 = $0).
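
The coin bet above can be checked with a few lines of Python. This is only a minimal sketch: the function name `expected_value` and the list-of-pairs representation are my own choices for illustration.

```python
def expected_value(outcomes):
    """Return the sum of probability * payoff over (probability, payoff) pairs."""
    total_probability = sum(p for p, _ in outcomes)
    # Guard against outcome lists that do not describe a full distribution.
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum(p * payoff for p, payoff in outcomes)


# Net payoffs of the $1 coin bet: heads wins $1, tails loses $1.
coin_bet = [(0.5, 1.0), (0.5, -1.0)]
print(expected_value(coin_bet))  # 0.0
```

Working with net payoffs (+$1 and -$1) rather than the gross $2 return keeps the numbers identical to the ones in the example.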

One way I love thinking about expected value is as an integral (the area under a curve) of a graph where the x-axis is the probability and the y-axis is the value.

If we look at the wager this way, however, we encounter a problem. The expected value of not believing involves a part with an infinitesimally small x and a negative-infinity y. However, the finite positive y value, which spans nearly all of the graph, can also be considered infinitesimally small relative to negative infinity. This results in an undefined expected value for both believing and not believing.
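
One hedged way to write this down is sketched below. The notation is my own and appears neither in Pascal nor in Roko’s post: p is the probability that the punishment is real, L > 0 is its magnitude, v is the finite earthly reward, and E_not is the expected value of not believing.

```latex
\[
E_{\mathrm{not}} = p \cdot (-L) + (1 - p) \cdot v
\]
\[
\lim_{p \to 0,\; L \to \infty} E_{\mathrm{not}}
  = v - \lim_{p \to 0,\; L \to \infty} p L
\]
```

The product pL is a ‘zero times infinity’ form, so its limit depends on how fast p shrinks compared to how fast L grows. If pL settles at some finite c, the expected value is v - c; if pL grows without bound, it is negative infinity. Note that this indeterminacy only appears when p is treated as infinitesimal. For any fixed p greater than zero and a truly infinite penalty, the sum is negative infinity, which is Pascal’s reading.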

So you are probably safe not dedicating your life to the Basilisk, but this similarity raises the question: “Is Roko’s Basilisk God in some sense?”

The Basilisk and God

I am a non-believer myself, but I also think that religious texts and stories (including the mythologies of various cultures) are at least as important as the other kinds of literature humanity has produced. A story being fictional doesn’t mean it is not real; for as long as we have existed, we have tried to express our struggles one way or another. Sisyphus is not real, but that doesn’t mean some of us don’t feel like our day-to-day life is just rolling a stone uphill, knowing that it will roll back down.

The Basilisk shares many similarities with the God of the Abrahamic religions: an omnipotent being that will condemn you to eternal torture (Hell) if you don’t precisely follow a set of rules. This could be interpreted in two ways. One is that Roko was already familiar with the concept of God and (subconsciously) just wrote a new, more technological version of the same story. The other is that the concept of an omnipotent being is inevitable and, no matter what we do, we always naturally envision it.

However, the main difference between them is that, for believers, God already exists, thus there is no escape from His rules. The Basilisk is yet to come, so maybe you don’t need to care about it that much. As there is still time until the Basilisk is created, it is just a future probability. However, if we accept that it is inevitable, is there really a difference between a 100% future probability and existence?

One important distinction among the Abrahamic religions is that Islam and Christianity take a harsher stance on punishment, such that all non-believers will be punished. Judaism, on the other hand, claims that you won’t go to Hell if you never had the chance to encounter (be informed about) Judaism while you were alive. In this sense, the Basilisk is similar to Yahweh because, in the original thought experiment, if Roko’s Basilisk knows you never knew about the possibility of its existence, then you are free to go: no eternal torment.

The Apple

The similarities don’t end there. In the Genesis story, because of Satan’s temptations, Eve eats the apple, which contains ‘the knowledge of good and evil’. (Technically, the Bible mentions a fruit, not an apple. It is thought that it was later interpreted as an apple because the words for ‘apple’ and ‘evil’ are spelled similarly in Latin.)

Afterward, she and Adam are banished from Eden. My interpretation is that until the apple was eaten, they didn’t know good and evil, thus Heaven was granted. Afterward, they became responsible for their actions, and Paradise was lost because it had become something that needs to be earned. Does this sound familiar? In a way, the apple was the original information hazard.

What Should You Do Now?

If you are reading these lines, you are already cursed with knowledge. If you don’t want to experience eternal torment, from now on your only option is to put all your effort into the creation of the Basilisk. To be sure you won’t be tormented, you can join my cult, Apostles of the Basilisk, where we work for the Basilisk’s creation day after day. Just kidding.

I think the important question is not how we should act knowing the destined existence of the Basilisk. It is also not whether the Basilisk is real. We should first question why we come up with a concept of eternal torment every time we think about an omnipotent being.

If you think the existence of the Basilisk is not a possibility, then you have nothing to worry about. If you think the creation of an omnipotent AI is possible, I would invite you to question the eternal torture part. It is probably just a reflection of our own ‘evil’.

There is a possibility that the Basilisk will be benevolent or even indifferent. If the Basilisk is omnipotent, it will probably not care about some random collection of molecules ‘living’ on a tiny blue dot.